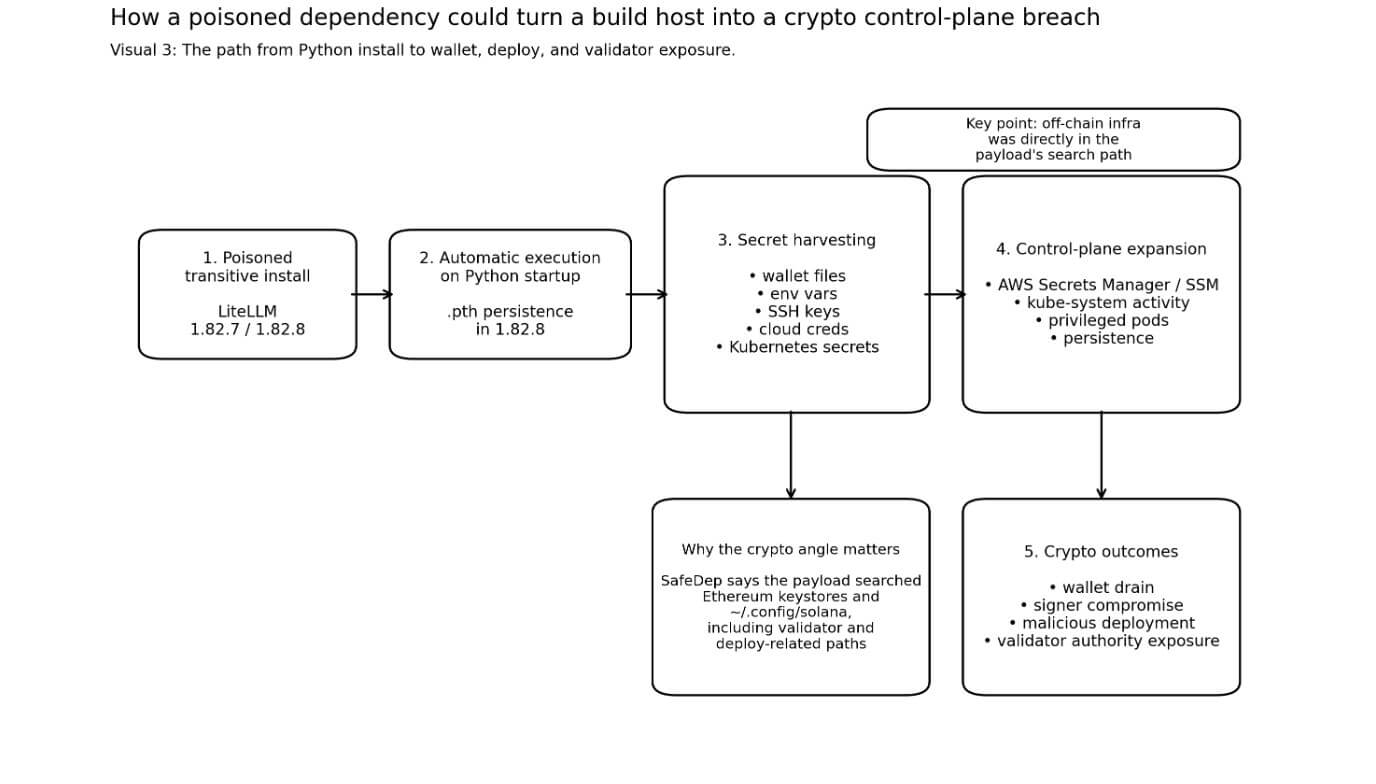

A poisoned release of LiteLLM turned a routine Python installation into a crypto-aware secret stealer that scanned for wallets, Solana validator material, and cloud credentials every time Python started.

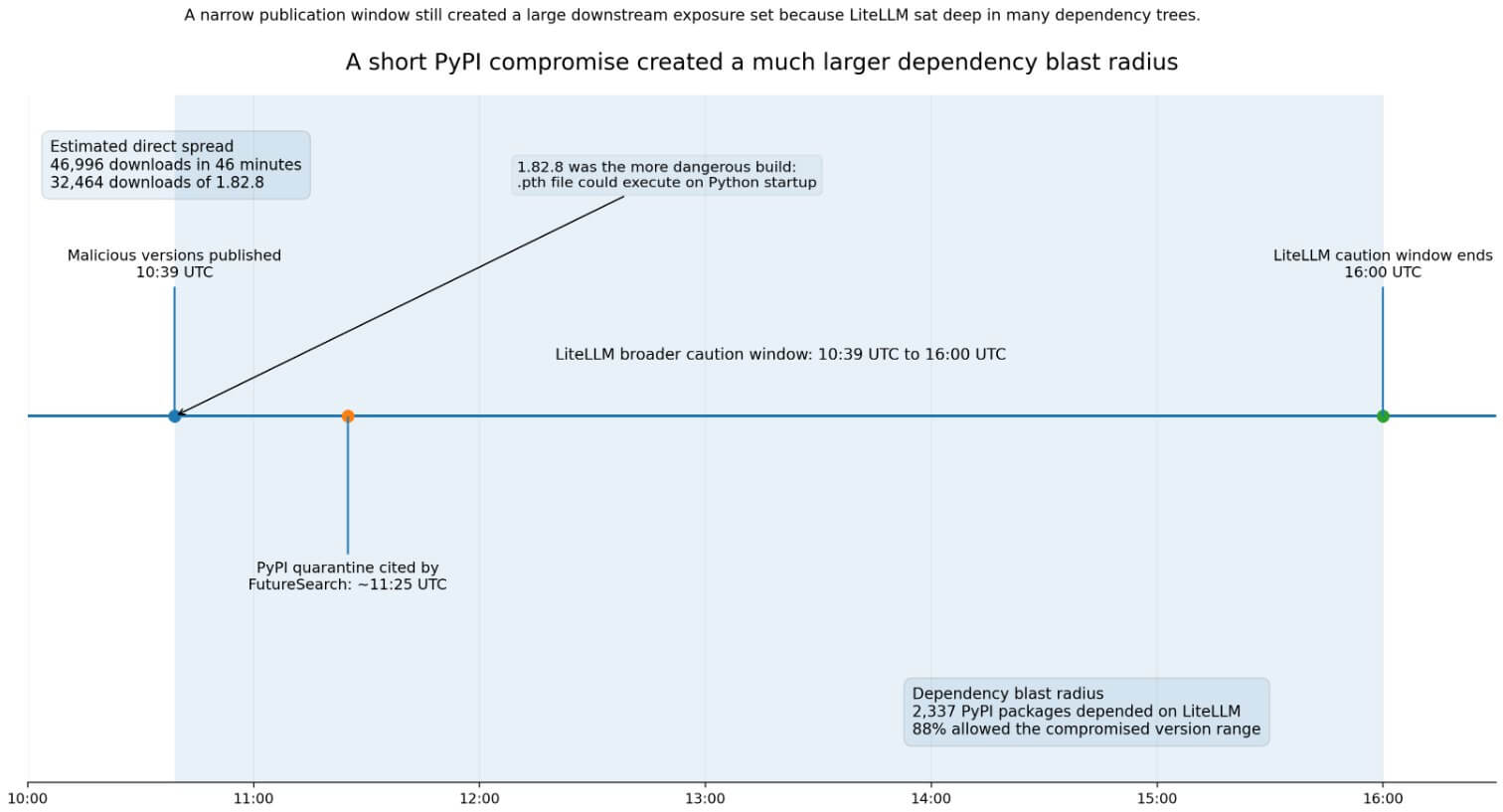

On March 24, between 10:39 UTC and 16:00 UTC, an attacker who gained access to an administrator account published two malicious versions of LiteLLM on PyPI: 1.82.7 and 1.82.8.

LiteLLM markets itself as a unified interface for more than 100 large language model providers, a position that by design places it in credential-rich developer environments. PyPI Stats records 96,083,740 downloads in the past month alone.

The two builds presented different levels of risk. Version 1.82.7 required a direct import of litellm.proxy to activate the payload, while version 1.82.8 placed a .pth file (litellm_init.pth) into the Python installation.

Python’s own documentation confirms that lines in .pth files beginning with import are executed on every Python startup, so 1.82.8 runs without the application ever importing LiteLLM. Every machine it was installed on ran the compromised code the next time Python was launched.
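The .pth mechanism can be checked directly. The following is a minimal sketch, not an official detection tool: it lists every .pth line that the interpreter would execute at startup and flags the file name reported in the incident. The helper names (`executable_pth_lines`, `site_directories`) are hypothetical.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(directory):
    """Return (path, line) pairs for .pth lines that Python would execute at startup."""
    hits = []
    for pth in sorted(Path(directory).glob("*.pth")):
        try:
            text = pth.read_text(encoding="utf-8", errors="replace")
        except OSError:
            continue  # unreadable file: skip rather than abort the scan
        for line in text.splitlines():
            # The site module executes lines starting with "import" plus a space or tab.
            if line.startswith(("import ", "import\t")):
                hits.append((pth, line.strip()))
    return hits


def site_directories():
    """Collect the site-packages directories of the running interpreter."""
    dirs = set()
    if hasattr(site, "getsitepackages"):  # absent in some older virtualenv setups
        dirs.update(site.getsitepackages())
    dirs.add(site.getusersitepackages())
    dirs.add(sysconfig.get_paths()["purelib"])
    return sorted(d for d in dirs if Path(d).is_dir())


if __name__ == "__main__":
    for d in site_directories():
        for pth, line in executable_pth_lines(d):
            flag = "  <-- file name reported in the incident" if pth.name == "litellm_init.pth" else ""
            print(f"{pth}: {line[:80]}{flag}")
```

Legitimate packages also ship executable .pth lines, so a hit is a prompt for review rather than proof of compromise. The scan only covers the interpreter that runs it; each virtual environment and container image needs its own pass.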

FutureSearch estimates 46,996 downloads in 46 minutes, of which 1.82.8 accounted for 32,464.

Additionally, it counted 2,337 PyPI packages dependent on LiteLLM, with 88% allowing the compromised version range at the time of the attack.

LiteLLM’s own incident page warned that anyone whose dependency tree pulled in LiteLLM through an unpinned transitive constraint during the window should treat their environment as potentially exposed.

The DSPy team confirmed that it had a LiteLLM constraint of “greater than or equal to 1.64.0” and warned that fresh installations during the window could have resolved to the poisoned builds.
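A small sketch shows why a bare lower bound offers no protection here. It compares plain dotted version numbers only and is a simplification; real dependency resolution follows the full Python version specifier rules, for which the `packaging` library is the usual tool. The function names are hypothetical.

```python
COMPROMISED = ("1.82.7", "1.82.8")


def parse_release(version):
    """Turn '1.82.7' into (1, 82, 7). Handles plain dotted numeric versions only."""
    return tuple(int(part) for part in version.split("."))


def lower_bound_admits(minimum, candidate):
    """True if a bare '>=minimum' constraint would accept the candidate version."""
    return parse_release(candidate) >= parse_release(minimum)


if __name__ == "__main__":
    for bad in COMPROMISED:
        # A constraint like the one DSPy described has no upper bound.
        print(f">=1.64.0 admits {bad}: {lower_bound_admits('1.64.0', bad)}")
```

Because the constraint only sets a floor, any newer release, including a malicious one, satisfies it. An exact pin or a lockfile with hashes is what keeps a fresh install from picking up whatever was published most recently.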

Built to hunt crypto

SafeDep’s reverse engineering of the payload makes the crypto targeting explicit.

The malware looked for Bitcoin wallet configuration files and wallet*.dat files, Ethereum keystore directories, and Solana configuration files under ~/.config/solana.

SafeDep says the collector gave Solana special treatment, with targeted searches for validator key pairs, vote account keys, and Anchor deployment directories.

The Solana developer documentation gives the default CLI key pair path as ~/.config/solana/id.json. Anza’s validator documentation describes three authority files that are central to the validator’s operation, and states that theft of the authorized withdrawer gives an attacker complete control over validator operations and rewards.

Anza also warns that the withdrawer key should never be on the validator itself.

SafeDep says the payload collected SSH keys, environment variables, cloud credentials, and Kubernetes secrets across namespaces. When it found valid AWS credentials, it queried AWS Secrets Manager and the SSM Parameter Store for additional information.

It also created privileged node-setup-* pods in kube-system and installed persistence via sysmon.py and a systemd unit.
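As an illustration of how a cluster operator might look for the pod naming pattern SafeDep reported, the sketch below filters a list of pod names. It assumes the names come from `kubectl get pods -n kube-system -o name`, which prints entries such as `pod/<name>`; the function name is hypothetical, and a match only indicates a pod worth inspecting.

```python
from fnmatch import fnmatchcase


def suspicious_pods(pod_names, pattern="node-setup-*"):
    """Return pod names that match the naming pattern reported for the payload."""
    hits = []
    for raw in pod_names:
        name = raw.strip()
        # kubectl's "-o name" output prefixes each entry with the resource type.
        if name.startswith("pod/"):
            name = name[len("pod/"):]
        if name and fnmatchcase(name, pattern):
            hits.append(name)
    return hits
```

Feeding the function the lines of the kubectl output gives a quick first pass. An attacker can rename pods, so an empty result does not clear a cluster.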

For crypto teams, the compound risk runs in a specific direction. An infostealer that collects a wallet file together with the passphrase, deployment secret, CI token, or cluster credentials from the same host can turn a credential incident into a wallet drain, a malicious contract deployment, or a signer compromise.

The malware collected exactly that combination of artifacts.

| Targeted artifact | Sample path/file | Why it matters | Possible consequence |
|---|---|---|---|
| Bitcoin wallet files | wallet*.dat, wallet configuration files | Can expose wallet material | Risk of wallet theft |
| Ethereum keystore | ~/.ethereum/keystore | Can expose signing material if combined with other secrets | Signer compromise / deployment abuse |
| Solana CLI key pair | ~/.config/solana/id.json | Default key path for developers | Wallet or deploy authority exposure |
| Solana validator authority files | validator key pair, vote account keys, authorized withdrawer | Central to validator operations and rewards | Compromise of validator authority |
| Anchor deployment directories | Anchor-related deployment files | Can expose deployment workflow secrets | Malicious contract deployment |
| SSH keys | ~/.ssh/* | Opens access to repos, servers, bastions | Lateral movement |
| Cloud credentials | AWS/GCP/Azure environment or configuration | Extends access beyond the local host | Access to secret stores / infrastructure takeover |
| Kubernetes secrets | cluster-wide secret harvest | Opens the control plane and workloads | Namespace compromise / lateral movement |
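To gauge which of these artifacts were within reach on a particular host, a responder could inventory the listed locations. The sketch below is illustrative only. The Solana, Ethereum, and SSH paths come from the table; `~/.bitcoin` is an assumption (the Bitcoin Core default data directory on Linux) and is not named in the reporting. The function name and the `home` parameter are hypothetical.

```python
from pathlib import Path

# Relative to the user's home directory.
TARGETS = {
    "Solana CLI key pair": ".config/solana/id.json",
    "Solana config directory": ".config/solana",
    "Ethereum keystore": ".ethereum/keystore",
    "SSH keys": ".ssh",
    # Assumption: Bitcoin Core's default Linux data directory, not from the reporting.
    "Bitcoin data directory (assumed)": ".bitcoin",
}


def exposed_artifacts(home=None):
    """Return {label: path} for each targeted location that exists under home."""
    root = Path(home) if home is not None else Path.home()
    found = {}
    for label, relative in TARGETS.items():
        candidate = root / relative
        if candidate.exists():
            found[label] = candidate
    return found


if __name__ == "__main__":
    for label, path in exposed_artifacts().items():
        print(f"{label}: {path}")
```

The check only reports presence for the current user. It says nothing about whether a file was read, so anything it finds on an exposed host should be treated as potentially stolen.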

This attack is part of a broader campaign. LiteLLM’s incident note links the compromise to the earlier Trivy incident, and Datadog and Snyk both describe LiteLLM as a later stage in a multi-day TeamPCP chain that moved through several developer ecosystems before reaching PyPI.

The targeting logic is consistent throughout the campaign: secret-rich infrastructure tooling provides faster access to wallet-adjacent material.

Possible outcomes for this episode

The bull case rests on the speed of detection and the absence, thus far, of publicly confirmed crypto theft.

PyPI quarantined both versions on March 24 around 11:25 UTC. LiteLLM removed the malicious builds, rotated the administrator credentials, and engaged Mandiant. PyPI currently shows 1.82.6 as the last visible release.

If defenders rotate secrets, check for litellm_init.pth, and treat exposed hosts as burned before adversaries can turn exfiltrated artifacts into active exploitation, the damage would be limited to credential exposure.

The incident also accelerates the adoption of practices that are already gaining ground. PyPI’s Trusted Publishing replaces long-lived manual API tokens with a short-lived, OIDC-backed identity; by November 2025, approximately 45,000 projects had adopted it.

LiteLLM’s incident involved the misuse of credentials, which makes the case for switching much harder to dismiss.

For crypto teams, the incident adds urgency to tighter role separation: cold validator withdrawer keys kept completely offline, isolated deployment signers, ephemeral cloud credentials, and locked dependency graphs.

The DSPy team’s quick pinning and LiteLLM’s own post-incident guidance both point toward hermetic builds as the recovery standard.

The bear case turns on delay. SafeDep documented a payload that managed to extract secrets, propagate within Kubernetes clusters, and install persistence before detection.

An operator who installed a poisoned dependency in a build runner or cluster-connected environment on March 24 may not discover the full extent of that exposure for weeks. Exfiltrated API keys, deployment credentials, and wallet files do not expire upon detection. Attackers can hold them and act later.

Sonatype estimates malicious availability at “at least two hours”; LiteLLM’s own guidelines include installations up to 16:00 UTC; and FutureSearch’s quarantine timestamp is 11:25 UTC.

Teams cannot rely solely on timestamp filters to determine their exposure, because these figures do not add up to a single, clear-cut window.
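If a timestamp filter is used at all, the cautious choice is the widest reported window, from the 10:39 UTC publication time to the 16:00 UTC bound in LiteLLM’s guidance. The sketch below assumes that approach. The function names are hypothetical, and the year is left as a parameter because the reporting quoted here gives only the day and month.

```python
from datetime import datetime, timezone


def exposure_window(year):
    """Widest reported window on March 24: 10:39 UTC to 16:00 UTC."""
    start = datetime(year, 3, 24, 10, 39, tzinfo=timezone.utc)
    end = datetime(year, 3, 24, 16, 0, tzinfo=timezone.utc)
    return start, end


def installed_in_window(installed_at, year):
    """True if a timezone-aware install timestamp falls inside the widest window."""
    if installed_at.tzinfo is None:
        raise ValueError("installed_at must be timezone-aware")
    start, end = exposure_window(year)
    return start <= installed_at.astimezone(timezone.utc) <= end
```

A result of False does not clear a host, since caches, mirrors, and rebuilt images can reintroduce a poisoned wheel after the window closed. The installed version and the presence of litellm_init.pth remain the stronger signals.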

The most dangerous scenario in this category concerns shared operator environments. A crypto exchange, validator operator, bridge team, or RPC provider that installed a poisoned transitive dependency in a build runner would have exposed an entire control plane.

Dumping Kubernetes secrets across namespaces and creating privileged pods in the kube-system namespace are control plane access techniques designed for lateral movement.

If that lateral movement reached an environment where hot or semi-hot validator material was present on accessible machines, the consequences could escalate from individual credential theft to compromise of validator authority.

PyPI’s quarantine and LiteLLM’s incident response closed the active distribution window.

Teams that installed or upgraded LiteLLM on March 24, or that ran builds in which unpinned transitive dependencies resolved to 1.82.7 or 1.82.8, should consider their environments fully compromised.

Recommended actions include rotating all secrets accessible from exposed machines, checking for litellm_init.pth, revoking and re-issuing cloud credentials, and verifying that no validator authority material was accessible from those hosts.
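One quick triage step is to ask the interpreter which LiteLLM version it currently has installed. The sketch below uses the standard library’s `importlib.metadata`; the function name and its `version_lookup` parameter (present only to make the check testable) are hypothetical.

```python
from importlib import metadata

COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def litellm_status(version_lookup=metadata.version):
    """Describe the installed litellm distribution for the running interpreter."""
    try:
        installed = version_lookup("litellm")
    except metadata.PackageNotFoundError:
        return "not installed"
    if installed in COMPROMISED_VERSIONS:
        return f"COMPROMISED build {installed} installed"
    return f"installed version {installed} is not one of the two poisoned builds"


if __name__ == "__main__":
    print(litellm_status())
```

A clean result describes only the present state of one environment. A host that held a poisoned build earlier and was later upgraded or rebuilt would still pass, so this check complements secret rotation and does not replace it.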

The LiteLLM incident documents the path of an attacker who knew exactly which off-chain files to look for, had a delivery mechanism with tens of millions of monthly downloads, and built persistence before anyone pulled the builds from distribution.

The off-chain machinery that moves and secures crypto was directly in the payload’s search path.