Ethereum co-founder Vitalik Buterin is calling on social media platforms to use cryptography and blockchain tools to make their content ranking systems more transparent and verifiable.

In a Monday post, Buterin argued that X should use zero-knowledge proofs (ZK proofs) and blockchain technology to prove that the algorithm deciding how far content reaches on the platform is fair. On December 9, he took issue with how X operates, arguing that the way owner Elon Musk runs the company is harmful:

“Elon Musk, I think you should consider that making

Ethereum Foundation AI lead Davide Crapis responded to the original post, saying, “If you want to claim that X is the platform for free speech, you need to disclose the optimization goals of your algorithm.” He added that “it should be readable and customizable for the users.”

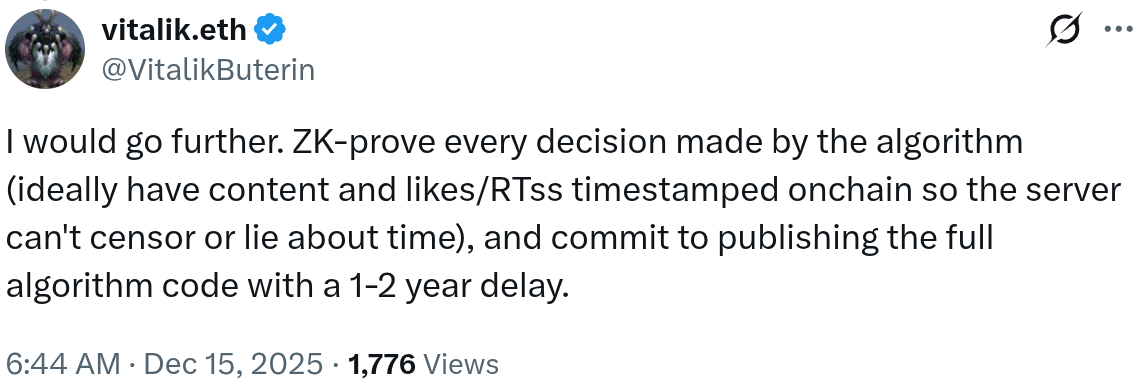

Buterin proposed a verifiable system that would use ZK proofs for every decision the algorithm makes and timestamp all content, likes and retweets on a blockchain “so the server can’t censor or lie about the time.” Under the proposal, the platform would also commit to publishing the full algorithm code with a delay of one to two years.

Source: Vitalik Buterin
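Buterin did not specify how the timestamping would be built. One common building block is a hash chain: each recorded action is hashed together with the previous hash, so the final “head” value commits to every action and its order, and publishing that head in a blockchain transaction shows the log existed no later than that block. The same hashing idea could cover the delayed code release: a platform publishes a salted hash of its ranking code today and reveals the code later for anyone to check. The following is a minimal Python sketch under those assumptions; `Event`, `chain_head`, `commit_code` and `verify_code` are hypothetical names, not part of Buterin’s proposal or any X system.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Event:
    """A single user action (post, like, retweet). Hypothetical record format."""
    kind: str
    user: str
    target: str
    timestamp: int  # Unix seconds, as claimed by the server


def event_digest(event: Event) -> bytes:
    # Canonical JSON (sorted keys) so identical events always hash identically.
    payload = json.dumps(asdict(event), sort_keys=True).encode()
    return hashlib.sha256(payload).digest()


def chain_head(events: list[Event]) -> str:
    """Fold events into a hash chain; the head commits to every event and its order."""
    head = bytes(32)  # all-zero starting value
    for event in events:
        head = hashlib.sha256(head + event_digest(event)).digest()
    return head.hex()


def commit_code(source: str, salt: bytes) -> str:
    """Publish this hash now; reveal (source, salt) after the disclosure delay."""
    return hashlib.sha256(salt + source.encode()).hexdigest()


def verify_code(commitment: str, source: str, salt: bytes) -> bool:
    """Anyone can check that the revealed code matches the earlier commitment."""
    return commit_code(source, salt) == commitment


if __name__ == "__main__":
    log = [
        Event("post", "alice", "post-1", 1_700_000_000),
        Event("like", "bob", "post-1", 1_700_000_060),
    ]
    published = chain_head(log)  # hypothetically anchored on a blockchain

    # Backdating the like produces a different head, so the edit is detectable.
    backdated = [log[0], Event("like", "bob", "post-1", 1_699_999_000)]
    print(chain_head(backdated) == published)  # False
```

The chain by itself only makes the log tamper-evident; any guarantee about time comes from when and where the head is published. A real deployment would more likely use Merkle trees, so a single action can be proven to be in the log without revealing the rest of it.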

ZK proofs are a cryptographic way to prove that a statement is true without revealing the underlying data. For example, you could prove that you are over 18 without disclosing your date of birth or full name. Buterin didn’t detail what the proofs would demonstrate in his proposed system, but they would likely show that algorithmic decisions followed certain constraints without exposing sensitive details.
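Proving “over 18” requires a range proof, which is more involved than fits here. The simplest classic zero-knowledge protocol is Schnorr’s proof of knowledge of a discrete logarithm: the prover shows it knows a secret `x` behind a public value `y = G^x mod P` without revealing `x`. The Python sketch below uses the Fiat-Shamir heuristic (hashing the transcript to derive the challenge) to make the proof non-interactive. `P`, `Q` and `G` are toy values chosen for readability and are insecure, and the function names and `context` parameter are hypothetical. Proving that a ranking algorithm ran correctly would call for general-purpose proof systems such as SNARKs or STARKs rather than this protocol; the sketch only illustrates the core idea of convincing a verifier without disclosing the secret.

```python
import hashlib
import secrets

# Toy parameters: P = 2*Q + 1 is a safe prime and G = 4 generates the subgroup
# of prime order Q. Far too small to be secure; for illustration only.
P = 2039
Q = 1019
G = 4


def challenge(y: int, t: int, context: bytes) -> int:
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = f"{P}|{G}|{y}|{t}|".encode() + context
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(x: int, context: bytes) -> tuple[int, int, int]:
    """Prove knowledge of x such that y = G^x mod P, without revealing x."""
    y = pow(G, x, P)              # public value
    r = secrets.randbelow(Q)      # fresh random nonce; reusing it would leak x
    t = pow(G, r, P)              # commitment
    c = challenge(y, t, context)
    s = (r + c * x) % Q           # response: r masks x
    return y, t, s


def verify(y: int, t: int, s: int, context: bytes) -> bool:
    """Accept iff G^s == t * y^c (mod P), which holds when s = r + c*x."""
    c = challenge(y, t, context)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


if __name__ == "__main__":
    secret_x = 777                      # known only to the prover
    proof = prove(secret_x, b"post-1")  # hypothetical context string
    print(verify(*proof, b"post-1"))    # True
```

The verifier sees only `y`, `t` and `s`; because the random nonce `r` masks `x` in the response, the transcript reveals nothing useful about the secret, yet the check can only pass if the prover actually knew it.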

Crypto is taking over social media

Buterin’s post echoed the ethos behind decentralized social media platforms, often grouped under the label SocialFi. Although none of these projects has gone mainstream, their centralized counterparts appear to take them seriously.

In early 2025, Meta, the parent company of Facebook and Instagram, blocked links to Pixelfed, a decentralized Instagram competitor. Links to the platform were flagged as ‘spam’ and removed immediately. Some users claimed that other Facebook competitors, including Mastodon, received the same treatment.

The crypto community, which tends to be wary of centralized control, has raised concerns about past decisions made by the leaders of social media platforms. When Musk announced in early January that X would prioritize content considered informative or educational over other types of content, many were unconvinced.

Critics asked who would decide what qualifies and whether the policy could become a tool for suppressing certain views. Musk has also been accused of restricting access to premium features for users who disagreed with him.

Buterin weighed in at the time as well, urging Musk to stay committed to freedom of expression on the platform and not to ban users for disagreeing or for expressing their views.

The impact of social media on society

Research has long shown that social media has an outsized impact on society and the functioning of democratic processes. An article published in 2024 suggested that “access to Facebook may increase belief in misinformation.”

Reuters also reported last month that recent court filings suggested Meta had halted internal research into Facebook’s mental health effects after finding causal evidence that its products were harming users’ mental health. That internal study reportedly found that “people who didn’t use Facebook for a week reported fewer feelings of depression, anxiety, loneliness and social comparison.”

The European Union has tried to address this problem with its Digital Services Act, which requires platforms to disclose the main parameters of their recommendation algorithms, to assess systemic risks and to publish their findings on the potential negative effects of their services. The risks it explicitly names include “negative effects on civic discourse and electoral processes, and public security.”

The Digital Services Act also requires platforms to give vetted researchers access to their data so they can independently study systemic risks. X’s failure to comply with this specific requirement is one of the reasons the European Commission gave for fining the company 120 million euros earlier this month.

Other reasons include a lack of transparency in